In the digital economy of 2026, information has a “perishable” value. Data collected an hour ago is history; data collected a minute ago is a report; but data processed right now is an opportunity. This shift from batch-based analysis to instantaneous insight is powered by Real-time Data Processing.

From autonomous high-frequency trading to life-saving medical alerts, the ability to ingest, analyze, and act upon data streams the moment they are created is the new baseline for enterprise success. This article explores the architecture, technologies, and strategic trends defining the world of Real-time Data Processing.

1. What is Real-time Data Processing?

Real-time Data Processing is the continuous ingestion and immediate transformation of data as it is generated. Unlike “Batch Processing,” where data is collected over hours or days and processed in bulk, real-time systems deal with “Data in Motion.”

The Latency Spectrum

In 2026, we categorize real-time systems into two distinct types:

- Hard Real-time: Systems where missing a deadline, even by a few milliseconds, constitutes a total system failure (e.g., anti-lock braking systems or pacemaker regulators).

- Soft Real-time: Systems where low latency is critical for value but a late result is not life-threatening (e.g., personalized website recommendations or live social media feeds).
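
The difference between the two categories can be sketched in a few lines of Python. This is only an illustration under assumed names: `evaluate_deadlines`, the 10 ms deadline, and the status strings are hypothetical and not part of any real-time framework.

```python
def evaluate_deadlines(latencies_ms: list[float], deadline_ms: float, hard: bool) -> str:
    """Classify a run of measured latencies against a deadline.

    Hard real-time: a single missed deadline counts as failure.
    Soft real-time: missed deadlines only degrade the service.
    """
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    misses = sum(1 for latency in latencies_ms if latency > deadline_ms)
    if misses == 0:
        return "ok"
    if hard:
        return "failed"
    miss_ratio = misses / len(latencies_ms)
    return f"degraded ({miss_ratio:.0%} late)"
```

The same measurements give different verdicts depending on the category: one late sample fails a hard real-time system outright, while a soft real-time system keeps running with reduced value.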

2. The Core Architecture of Real-time Systems

To achieve the speeds required for Real-time Data Processing, organizations have moved away from traditional relational databases toward “Stream-First” architectures.

Event Stream Ingestion

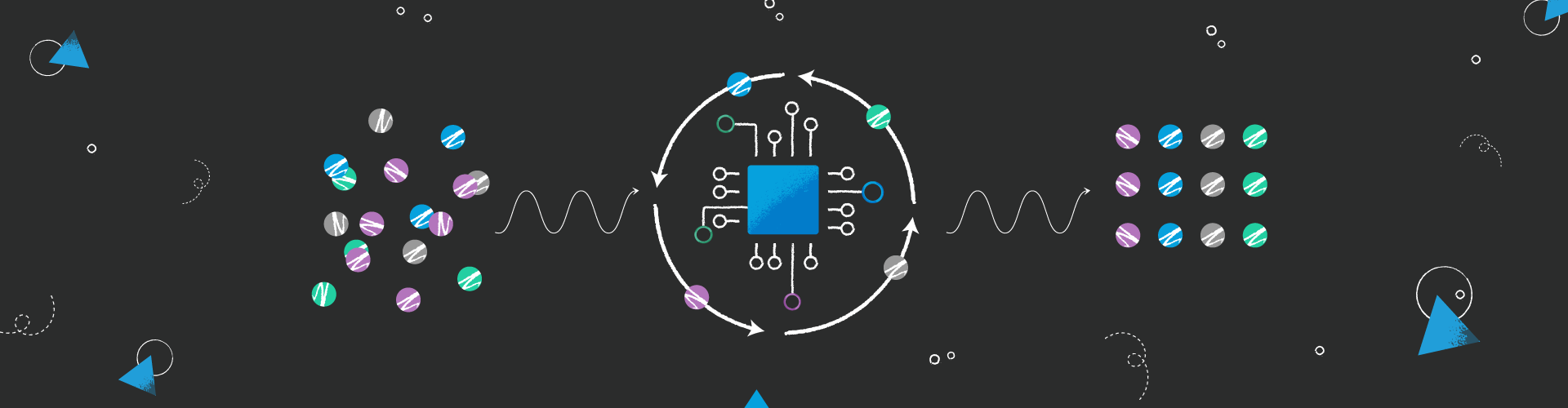

The first step involves capturing “events” from millions of sources—IoT sensors, user clicks, or financial transactions. Platforms like Apache Kafka and Amazon Kinesis act as the central nervous system, buffering these events for immediate consumption.
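
As a rough mental model of what an event broker does, the sketch below keeps events in an append-only log and tracks a read position per consumer. It is a toy, in-memory stand-in, not the Kafka or Kinesis API; `EventLog`, `append`, and `poll` are hypothetical names chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any


@dataclass
class EventLog:
    """Toy append-only log standing in for one broker topic (assumption)."""

    events: list[dict[str, Any]] = field(default_factory=list)
    # Maps a consumer name to the offset of the next event it should read.
    offsets: dict[str, int] = field(default_factory=dict)

    def append(self, event: dict[str, Any]) -> int:
        """Store an event and return its offset in the log."""
        self.events.append(event)
        return len(self.events) - 1

    def poll(self, consumer: str, max_events: int = 10) -> list[dict[str, Any]]:
        """Return the next unread events for a consumer and advance its offset."""
        start = self.offsets.get(consumer, 0)
        batch = self.events[start:start + max_events]
        self.offsets[consumer] = start + len(batch)
        return batch
```

Because each consumer has its own offset, a dashboard and a fraud checker can read the same events independently and at their own pace, which is the buffering role described above.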

Stream Processing Engines

Engines such as Apache Flink and Spark Structured Streaming allow developers to run complex SQL-like queries on data streams while they are still in flight.
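
To make "queries on data in flight" concrete, here is a minimal plain-Python sketch of a tumbling-window count, roughly what a windowed GROUP BY query computes inside a stream engine. It does not use the Flink or Spark APIs; the function name and the `(timestamp, key)` event shape are assumptions for illustration.

```python
from collections import defaultdict
from typing import Iterable


def tumbling_window_counts(
    events: Iterable[tuple[float, str]], window_seconds: float
) -> dict[tuple[int, str], int]:
    """Count events per key in fixed, non-overlapping time windows.

    Each event is (timestamp_in_seconds, key). The result maps
    (window_index, key) to the number of events seen in that window.
    """
    counts: dict[tuple[int, str], int] = defaultdict(int)
    for timestamp, key in events:
        # Window 0 covers [0, window_seconds), window 1 the next span, and so on.
        window_index = int(timestamp // window_seconds)
        counts[(window_index, key)] += 1
    return dict(counts)
```

The loop touches each event once as it arrives, so the aggregate is always up to date without a separate batch job. A production engine adds what this sketch leaves out, such as late-event handling and fault-tolerant state.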

Figure: Real-time Data Processing pipeline from ingestion to action. A flowchart shows data moving from IoT sensors into an event broker like Kafka, followed by processing in Apache Flink and output to a real-time dashboard.

3. Why 2026 is the Era of “Edge-to-Stream” Integration

The biggest trend in Real-time Data Processing this year is the convergence of the “Edge” and the “Stream.”

Reducing the Physics of Latency

Even at the speed of light, sending data to a central cloud and back takes time. By using edge computing, companies now perform the first layer of real-time processing directly on the device.
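
The physical floor on that round trip is easy to estimate. The sketch below computes propagation delay only; the 0.67 velocity factor for optical fiber is an approximate, assumed value, and the 2,000 km distance in the note is an arbitrary example. Real round trips are longer once routing, queuing, and processing are added.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum
FIBER_VELOCITY_FACTOR = 0.67       # assumption: light in fiber travels at about 2/3 of c


def round_trip_ms(distance_km: float, velocity_factor: float = FIBER_VELOCITY_FACTOR) -> float:
    """Return the minimum round-trip propagation delay in milliseconds."""
    one_way_seconds = distance_km / (SPEED_OF_LIGHT_KM_S * velocity_factor)
    return 2 * one_way_seconds * 1000
```

Under these assumptions, a data center 2,000 km away costs about 20 ms per round trip before any computation happens, which is why latency-critical logic moves onto the device.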

AI-Powered Streaming

In 2026, we no longer just process data; we predict it. Real-time Data Processing now integrates predictive analytics models directly into the stream. For example, a credit card processor doesn’t just check whether you have enough balance; in under 50 milliseconds, it uses AI to predict whether the current transaction fits your behavioral pattern.
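
A production system would use a trained model here. As a simplified stand-in, the sketch below keeps a running mean and variance of a cardholder's past amounts (Welford's online algorithm) and flags a transaction that falls far outside that pattern. `SpendingProfile`, `is_suspicious`, and the threshold of 3 standard deviations are illustrative assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class SpendingProfile:
    """Running statistics of past transaction amounts (Welford's algorithm)."""

    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, amount: float) -> None:
        """Fold one new amount into the running mean and variance."""
        self.count += 1
        delta = amount - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (amount - self.mean)

    def z_score(self, amount: float) -> float:
        """Return how many standard deviations the amount is from the mean."""
        if self.count < 2:
            return 0.0  # not enough history to judge
        std = math.sqrt(self.m2 / (self.count - 1))
        if std == 0:
            return 0.0 if amount == self.mean else math.inf
        return abs(amount - self.mean) / std


def is_suspicious(profile: SpendingProfile, amount: float, threshold: float = 3.0) -> bool:
    """Flag a transaction that deviates strongly from the cardholder's pattern."""
    return profile.z_score(amount) > threshold
```

Both the update and the score take constant time and need no stored history, which is what makes this kind of check feasible inside a tight per-transaction latency budget.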

4. Key Use Cases: Real-time Data in Action

Real-time Data Processing is transforming every vertical by enabling “Instant Response” capabilities.

Finance: High-Frequency Trading (HFT) and Fraud

In the world of HFT, a microsecond is the difference between a million-dollar profit and a loss. Real-time systems analyze global market feeds to execute trades at speeds invisible to the human eye. Simultaneously, Fraud Detection Models analyze transaction metadata to block stolen cards at the point of sale.

Healthcare: Remote Patient Monitoring

Wearable devices now process heart rate and oxygen levels in real time. If a patient shows signs of an impending cardiac event, the Real-time Data Processing layer triggers an automatic emergency alert to the nearest medical facility, so that care can begin sooner.

Retail: Dynamic Pricing and Personalization

Smart shelves and e-commerce platforms now adjust prices in real-time based on current demand, stock levels, and even the local weather. If a customer lingers on a product page, the system can trigger a real-time “limited time offer” to close the sale.

Figure: Real-time Data Processing application in a smart retail environment. A futuristic grocery store where electronic shelf labels update instantly as the real-time analytics engine calculates optimal pricing.

5. Overcoming the Challenges of Real-time Systems

Processing data at the speed of thought is not without its difficulties.

Data Consistency and “Exactly-Once” Processing

In a distributed system, a message might be sent twice or arrive out of order. Real-time Data Processing frameworks must implement “Exactly-Once” semantics so that each message affects the result exactly once: a financial transaction isn’t counted twice, and a sensor alert isn’t missed.
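
One common building block for this guarantee is an idempotent consumer: each message carries a unique ID, and redelivered duplicates are ignored. The sketch below shows only that deduplication idea, not how any particular framework implements exactly-once processing; `IdempotentLedger` and the message IDs are hypothetical, and a real system would persist the seen IDs together with the state they protect.

```python
class IdempotentLedger:
    """Applies each transaction at most once, keyed by a unique message ID."""

    def __init__(self) -> None:
        self.balance = 0
        self._seen_ids: set[str] = set()

    def apply(self, message_id: str, amount: int) -> bool:
        """Apply the amount unless this message was already processed.

        Returns True if the balance changed, False for a duplicate delivery.
        """
        if message_id in self._seen_ids:
            return False
        self._seen_ids.add(message_id)
        self.balance += amount
        return True
```

With at-least-once delivery from the broker and this check in the consumer, a retried message changes the balance only the first time it arrives.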

Scalability and “Backpressure”

During a sudden spike in data (like a Black Friday sale or a viral social media event), the system must handle “Backpressure”: slowing down ingestion so that the processing engine isn’t overwhelmed and doesn’t crash.
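
The simplest form of backpressure is a bounded buffer between producer and consumer. The sketch below uses Python's standard `queue.Queue` with a maximum size; the helper names `offer` and `drain_one` are assumptions made for this example.

```python
import queue


def offer(buffer: queue.Queue, event: object) -> bool:
    """Try to enqueue an event; return False when the buffer is full.

    A False result is the backpressure signal: the producer should pause,
    retry later, or shed load instead of overwhelming the consumer.
    """
    try:
        buffer.put_nowait(event)
        return True
    except queue.Full:
        return False


def drain_one(buffer: queue.Queue) -> object:
    """Simulate the processing engine consuming a single event."""
    return buffer.get_nowait()
```

With `queue.Queue(maxsize=2)`, a third `offer` is refused until `drain_one` frees a slot. In a threaded producer, a blocking `buffer.put(event)` would achieve the same effect by pausing the producer until space is available.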

The “Cost of Now”

Real-time infrastructure is significantly more expensive than batch processing. Organizations should audit their workloads step by step to determine which data truly needs to be processed in real time and which can wait for the nightly batch.

6. The Role of Vector Databases in Real-time AI

For Real-time Data Processing to support Generative AI, it needs a new kind of storage. Vector Databases allow real-time systems to perform “Similarity Searches.” This enables an AI agent to look at a live data stream and instantly find the most relevant historical context to provide an accurate answer.
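
At its core, a similarity search ranks stored vectors by how close they are to a query vector. The sketch below does this by brute force with cosine similarity; real vector databases typically rely on approximate nearest-neighbor indexes to stay fast at scale. The function names and the tiny two-dimensional vectors in the note are illustrative assumptions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between two vectors (0.0 for a zero vector)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], index: dict[str, list[float]], k: int = 1) -> list[str]:
    """Return the names of the k stored vectors most similar to the query."""
    ranked = sorted(
        index.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:k]]
```

For instance, with stored vectors `{"refund": [1.0, 0.0], "shipping": [0.0, 1.0], "billing": [0.9, 0.1]}`, the query `[1.0, 0.0]` ranks `refund` first and `billing` second, which is how an agent would pull the closest historical context for a live event.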

7. Security and Privacy: Governance at Speed

In 2026, Data Governance Frameworks must be as fast as the data they protect.

- Real-time Masking: Sensitive PII (Personally Identifiable Information) is automatically masked or anonymized while it is in the stream, ensuring that downstream analytics see the trends without seeing the private details.

- Intrusion Detection: Real-time systems monitor network traffic patterns to identify and neutralize cyberattacks (like DDoS or SQL injections) the moment they begin.

Figure: Real-time security and data governance in a streaming environment. A conceptual visualization of a cybersecurity layer filtering and protecting data packets as they move through a real-time processing hub.
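
Real-time masking, as described in the list above, can be as simple as a transformation applied to each event before it is forwarded downstream. The following is a minimal sketch: the two regular expressions are deliberately simplified assumptions, catch only basic email addresses and 13 to 16 digit card numbers, and are not a complete PII detector.

```python
import re

# Simplified patterns (assumptions): real PII detection needs far broader coverage.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){12,15}\d\b")


def mask_event(text: str) -> str:
    """Replace email addresses and card-like digit runs with placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    return CARD_PATTERN.sub("[CARD]", text)
```

Applied to each message in the stream, this keeps the shape of the event intact for analytics while removing the private values themselves.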

8. Hardware Performance for Streaming

To achieve sub-millisecond latency, Real-time Data Processing requires specialized hardware.

- In-Memory Processing: Storing the “Working Set” of data in RAM (or specialized HBM3 memory) rather than on disk.

- FPGA and GPU Acceleration: Offloading heavy mathematical transformations from the CPU to specialized hardware to handle millions of events per second without breaking a sweat.

9. The Future: From Real-time to “Predictive-Time”

As we move toward 2027, the trend is shifting toward Predictive-Time Processing. This involves using AI to “hallucinate” the next few seconds of a data stream based on current momentum. This allows systems to begin preparing a response before the data even arrives, effectively achieving “Negative Latency.”
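
The idea of predicting from "current momentum" can be illustrated with the simplest possible predictor: linear extrapolation from the last two readings. This stands in for the AI model the paragraph describes and is only a sketch; `extrapolate_next` and the `(timestamp, value)` sample shape are assumptions.

```python
def extrapolate_next(samples: list[tuple[float, float]], horizon: float) -> float:
    """Predict the value `horizon` seconds after the latest sample.

    Each sample is (timestamp_in_seconds, value). The prediction continues
    the slope between the two most recent samples.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate a trend")
    (t0, v0), (t1, v1) = samples[-2], samples[-1]
    if t1 == t0:
        raise ValueError("the last two samples must have different timestamps")
    slope = (v1 - v0) / (t1 - t0)
    return v1 + slope * horizon
```

A system could start preparing its response from the predicted value and then correct course when the real reading arrives, which is the trade-off behind the "Negative Latency" claim: the saved time is only useful when the prediction is accurate.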

10. Conclusion: The Competitive Edge of Immediacy

We are no longer living in a world that waits. Real-time Data Processing has transformed from a high-tech luxury into a survival requirement. Whether it is responding to a customer’s click, a sensor’s vibration, or a market’s fluctuation, the speed of your response is the ceiling of your success.

By investing in stream-first architectures, integrating AI at the edge, and maintaining a robust governance framework, your organization can move beyond analyzing the past and start shaping the present. In 2026, the future belongs to those who can process it in real-time.