The ability to see is one of the most complex biological functions ever evolved. For decades, replicating this ability in machines was considered the “Holy Grail” of Artificial Intelligence. In 2026, we have moved past simple image recognition. Computer Vision has evolved into a sophisticated cognitive system capable of understanding context, predicting movement, and perceiving 3D depth with greater precision than the human eye.

From autonomous urban air mobility (flying taxis) to real-time robotic surgery, Computer Vision is the primary sensory organ of the modern digital world. This article provides an exhaustive exploration of the technologies, architectures, and ethical considerations defining this field today.

1. What is Computer Vision?

Computer Vision is a field of Artificial Intelligence that enables computers and systems to derive meaningful information from digital images, videos, and other visual inputs—and take actions or make recommendations based on that information.

The Evolution: From Pixels to Patterns

In its infancy, Computer Vision relied on “Feature Engineering,” where humans manually told computers to look for edges or specific colors. Today, powered by Neural Network Optimization, Deep Learning models “learn” features automatically through exposure to millions or even billions of images, identifying complex patterns that human engineers would never think to specify by hand.
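
To make the contrast concrete, here is a minimal sketch of a classic hand-engineered feature: the Sobel edge detector, whose 3×3 kernels were designed by people rather than learned from data. It assumes a grayscale image stored as a list of lists of intensities; the function name and the threshold value are illustrative choices, not part of any particular library.

```python
from math import hypot

# Hand-designed Sobel kernels: they respond to horizontal and vertical intensity changes.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [ 0,  0,  0],
           [ 1,  2,  1]]


def sobel_edges(image, threshold):
    """Return a binary edge map (1 = edge) for the interior pixels of a grayscale image."""
    height, width = len(image), len(image[0])
    edges = [[0] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = gy = 0
            for dy in range(-1, 2):
                for dx in range(-1, 2):
                    pixel = image[y + dy][x + dx]
                    gx += SOBEL_X[dy + 1][dx + 1] * pixel
                    gy += SOBEL_Y[dy + 1][dx + 1] * pixel
            # A gradient magnitude above the hand-picked threshold counts as an edge.
            if hypot(gx, gy) > threshold:
                edges[y][x] = 1
    return edges


# A 5x5 image: dark left side, bright right side, so there is a vertical edge between them.
image = [[0, 0, 255, 255, 255] for _ in range(5)]
for row in sobel_edges(image, threshold=100):
    print(row)
```

Every number in this detector (the kernel weights and the threshold) was chosen by a person; a deep learning model instead learns thousands of such filters from examples.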

2. Core Technologies Powering Modern Vision

To understand how Computer Vision works in 2026, we must look at the foundational algorithms that process visual data.

Convolutional Neural Networks (CNNs)

CNNs remain the workhorse of the industry. By sliding small learned “filters” (grids of weights) across an image, a CNN can identify low-level features (lines and dots) in early layers and high-level concepts (faces, cars, or tumors) in deeper layers.
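
The sketch below shows, in plain Python, roughly what a single CNN layer does to a grayscale image: slide a filter across it (a “valid” cross-correlation, which is what most deep learning libraries call convolution), apply a ReLU activation, then downsample with 2×2 max pooling. The kernel values are hand-picked for illustration; in a real CNN they are learned during training, and each layer applies many filters at once.

```python
def conv2d(image, kernel):
    """Valid cross-correlation: the output shrinks by (kernel size - 1) in each dimension."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[y + i][x + j] for i in range(kh) for j in range(kw))
            for x in range(out_w)
        ]
        for y in range(out_h)
    ]


def relu(feature_map):
    """Keep positive responses and zero out negative ones."""
    return [[max(0, v) for v in row] for row in feature_map]


def max_pool_2x2(feature_map):
    """Take the maximum of each non-overlapping 2x2 window (an odd last row/column is dropped)."""
    return [
        [
            max(feature_map[y][x], feature_map[y][x + 1],
                feature_map[y + 1][x], feature_map[y + 1][x + 1])
            for x in range(0, len(feature_map[0]) - 1, 2)
        ]
        for y in range(0, len(feature_map) - 1, 2)
    ]


# Illustrative 2x2 filter that responds to dark-to-bright transitions from left to right.
kernel = [[-1, 1],
          [-1, 1]]
image = [[0, 0, 9, 9, 9] for _ in range(5)]
features = max_pool_2x2(relu(conv2d(image, kernel)))
print(features)
```

Stacking many such layers is what lets deeper layers respond to larger, more abstract patterns: each pooled feature summarizes a wider region of the original image.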

Vision Transformers (ViT)

Originally designed for text (Natural Language Processing), Transformers have revolutionized Computer Vision. Unlike CNNs, which process local pixel neighborhoods, Vision Transformers split the image into small patches and use self-attention to relate every patch to every other patch, allowing the model to capture global relationships between objects in a scene from the very first layer.
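
A minimal sketch of the two ideas behind a Vision Transformer: cutting the image into fixed-size patches that become “tokens,” and scaled dot-product self-attention, in which every patch scores its relevance to every other patch. For brevity, the raw patch vectors are used directly as queries, keys, and values; a real ViT first applies learned linear projections and adds position embeddings, both omitted here.

```python
import math


def patchify(image, patch_size):
    """Split an H x W grayscale image into flattened, non-overlapping patches (row-major order).

    Assumes H and W are multiples of patch_size.
    """
    patches = []
    for top in range(0, len(image), patch_size):
        for left in range(0, len(image[0]), patch_size):
            patch = [image[top + i][left + j]
                     for i in range(patch_size) for j in range(patch_size)]
            patches.append(patch)
    return patches


def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product attention where queries, keys and values are the tokens themselves."""
    dim = len(tokens[0])
    outputs, weights = [], []
    for query in tokens:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in tokens]
        attn = softmax(scores)  # how much this patch attends to every patch, including itself
        weights.append(attn)
        outputs.append([sum(a * value[d] for a, value in zip(attn, tokens)) for d in range(dim)])
    return outputs, weights


image = [[1, 1, 0, 0],
         [1, 1, 0, 0],
         [0, 0, 1, 1],
         [0, 0, 1, 1]]
tokens = patchify(image, patch_size=2)   # 4 patches, each a vector of 4 pixels
_, weights = self_attention(tokens)
print(len(tokens), [round(w, 3) for w in weights[0]])
```

In this toy example the top-left patch attends most strongly to itself and to the bottom-right patch, which is on the opposite side of the image but has the same content. That kind of long-range link is what a single small convolution filter cannot see.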

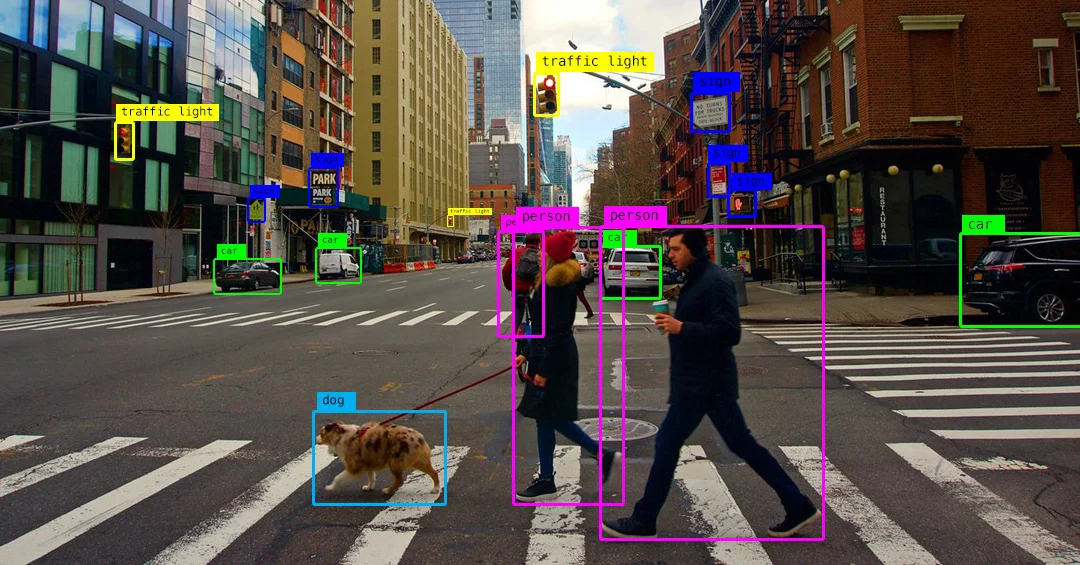

Image Segmentation: Semantic vs. Instance

- Semantic Segmentation: Categorizing every pixel in an image (e.g., “this is road,” “this is sky”).

- Instance Segmentation: Distinguishing between individual objects of the same type (e.g., “this is car #1,” “this is car #2”).
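
One simple way to see the difference in code: start from a semantic mask (one class label per pixel) and split a class into separate instances by labeling its connected regions. This connected-components approach is only an illustration and works only when objects don’t touch; modern instance segmentation models (for example, Mask R-CNN) predict a separate mask for each object instead. The class IDs and the function name are hypothetical.

```python
from collections import deque


def instances_from_semantic(mask, target_class):
    """Label 4-connected regions of `target_class` as separate instances (0 = not that class)."""
    height, width = len(mask), len(mask[0])
    instance_map = [[0] * width for _ in range(height)]
    next_id = 0
    for y in range(height):
        for x in range(width):
            if mask[y][x] == target_class and instance_map[y][x] == 0:
                next_id += 1  # found an unlabeled pixel: start a new instance
                instance_map[y][x] = next_id
                queue = deque([(y, x)])
                while queue:  # breadth-first flood fill over same-class neighbours
                    cy, cx = queue.popleft()
                    for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                        if (0 <= ny < height and 0 <= nx < width
                                and mask[ny][nx] == target_class and instance_map[ny][nx] == 0):
                            instance_map[ny][nx] = next_id
                            queue.append((ny, nx))
    return instance_map, next_id


# Semantic mask: "road" = 0, "car" = 1. Two separate cars share the same semantic label.
semantic = [[1, 1, 0, 0, 0],
            [1, 1, 0, 1, 1],
            [0, 0, 0, 1, 1]]
instance_map, count = instances_from_semantic(semantic, target_class=1)
print(count)  # number of distinct cars
for row in instance_map:
    print(row)
```

The semantic mask only says “car” for eight pixels; the instance map adds the information that those pixels belong to two different cars.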

Figure: Semantic and instance segmentation in a complex street scene, showing how an AI system separates general categories from specific individual objects.

3. Computer Vision in 2026: The Rise of 3D Perception

The biggest breakthrough in 2026 is the transition from 2D image analysis to 3D spatial awareness.

Neural Radiance Fields (NeRFs) and Gaussian Splatting

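These technologies allow Computer Vision systems to turn a collection of ordinary 2D photos into a navigable, photorealistic 3D scene. NeRFs encode the scene as a neural network that predicts color and density at any point in space, while Gaussian Splatting represents it as a large set of small 3D Gaussians that can be rendered in real time. This is a cornerstone for the Digital Twin industry, allowing architects and engineers to visualize real-world sites in fine detail.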
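
At the heart of NeRF is volume rendering: the network predicts a density σ and a color for sample points along each camera ray, and the pixel color is their transmittance-weighted sum, C = Σ T_i (1 - exp(-σ_i δ_i)) c_i, where T_i is the fraction of light that survives to sample i and δ_i is the spacing between samples. The sketch below implements only this compositing step, using the standard discrete approximation from the original NeRF paper; the hand-written sample values stand in for a trained network’s outputs, and Gaussian Splatting (which uses a different representation) is not shown.

```python
import math


def render_ray(densities, colors, deltas):
    """Composite samples along one ray: C = sum_i T_i * (1 - exp(-sigma_i * delta_i)) * c_i."""
    transmittance = 1.0              # T_i: fraction of light that reaches sample i unblocked
    pixel = [0.0, 0.0, 0.0]
    for sigma, color, delta in zip(densities, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)   # opacity of this segment of the ray
        weight = transmittance * alpha
        pixel = [p + weight * c for p, c in zip(pixel, color)]
        transmittance *= 1.0 - alpha             # i.e. multiply by exp(-sigma * delta)
    return pixel, transmittance


# Three samples: empty space, a dense red surface, then a blue point hidden behind it.
densities = [0.0, 50.0, 50.0]
colors = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0)]
deltas = [0.1, 0.1, 0.1]
pixel, leftover = render_ray(densities, colors, deltas)
print([round(c, 3) for c in pixel], round(leftover, 3))
```

The red surface absorbs almost all of the transmittance, so the hidden blue point contributes almost nothing to the final pixel. Training a NeRF amounts to adjusting the network until rays rendered this way reproduce the input photos.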

LiDAR and Stereo Vision Integration

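Modern autonomous systems no longer rely on a single camera alone. By combining stereo camera pairs, which estimate depth from the slight offset between two views, with LiDAR (Light Detection and Ranging), which measures distance directly with laser pulses, Computer Vision models create “Depth Maps” that allow robots to navigate complex, crowded environments while avoiding collisions.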
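
Two pieces of arithmetic sit underneath these depth maps. For a calibrated stereo pair, depth follows from disparity as Z = f·B / d (focal length in pixels times the baseline between the cameras, divided by the disparity in pixels). For LiDAR, each 3D point can be projected into the image with a pinhole camera model so that its range is written into a per-pixel depth map. The sketch assumes the LiDAR points are already transformed into the camera’s coordinate frame (x right, y down, z forward) and uses made-up intrinsics (fx, fy, cx, cy).

```python
def stereo_depth(disparity_px, focal_px, baseline_m):
    """Depth (metres) from stereo disparity: Z = f * B / d."""
    if disparity_px <= 0:
        return None  # zero disparity means the point is effectively at infinity
    return focal_px * baseline_m / disparity_px


def lidar_depth_map(points, fx, fy, cx, cy, width, height):
    """Project camera-frame 3D points into a sparse depth map (None = no measurement)."""
    depth = [[None] * width for _ in range(height)]
    for x, y, z in points:
        if z <= 0:
            continue  # behind the camera
        u = int(round(fx * x / z + cx))  # pinhole projection to pixel column
        v = int(round(fy * y / z + cy))  # ... and to pixel row
        if 0 <= u < width and 0 <= v < height:
            # Keep the nearest return if several points land on the same pixel.
            if depth[v][u] is None or z < depth[v][u]:
                depth[v][u] = z
    return depth


print(stereo_depth(disparity_px=35.0, focal_px=700.0, baseline_m=0.12))
depth = lidar_depth_map([(0.0, 0.0, 10.0), (1.0, 0.0, 5.0), (0.0, 0.0, -2.0)],
                        fx=4.0, fy=4.0, cx=2.0, cy=2.0, width=5, height=5)
```

LiDAR depth maps are sparse (most pixels have no measurement), while stereo gives dense but noisier depth; fusion systems combine the two to get the strengths of both.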

4. Key Industrial Applications

Computer Vision is no longer a laboratory curiosity; it is a multi-billion-dollar industrial utility.

Healthcare: Diagnostic Imaging

AI models now assist radiologists by highlighting anomalies in MRIs and CT scans that might be too subtle for a tired human eye. In 2026, AI in Healthcare has moved toward “Predictive Diagnostics,” where vision systems identify early-stage cellular changes before they become visible tumors.

Retail: The Frictionless Store

Following the “Just Walk Out” trend, retail environments use Computer Vision to track which items a customer picks up. This requires advanced “Pose Estimation” to understand human gestures and ensure that the correct item is charged to the correct virtual cart.
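
A minimal, hypothetical sketch of the attribution step: given the wrist keypoints that a pose estimator produced for each shopper and the image location where a shelf item was picked up, assign the item to the shopper with the nearest wrist within a distance threshold. The data layout, threshold, item name, and function name are all assumptions for illustration; production systems typically fuse multiple camera views and track people over time.

```python
import math


def attribute_pick(pick_xy, shoppers, max_distance):
    """Return the ID of the shopper whose wrist is closest to the pick location, or None."""
    best_id, best_dist = None, max_distance
    for shopper_id, keypoints in shoppers.items():
        for joint in ("left_wrist", "right_wrist"):
            if joint not in keypoints:
                continue  # the pose estimator may miss occluded joints
            dist = math.dist(pick_xy, keypoints[joint])
            if dist <= best_dist:
                best_id, best_dist = shopper_id, dist
    return best_id


carts = {"shopper_a": [], "shopper_b": []}
shoppers = {
    "shopper_a": {"left_wrist": (120, 340), "right_wrist": (180, 335)},
    "shopper_b": {"right_wrist": (410, 300)},   # left wrist occluded
}
owner = attribute_pick((400, 305), shoppers, max_distance=50)
if owner is not None:
    carts[owner].append("oat_milk")
print(carts)
```

Returning None when no wrist is close enough matters: an uncertain pick is better flagged for review than charged to the wrong virtual cart.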

Agriculture: Precision Farming

Drones equipped with multispectral cameras fly over crops to identify pest infestations or nutrient deficiencies. By using Computer Vision, farmers can apply pesticides only to the affected plants, reducing chemical usage by up to 60% and supporting Sustainable Tech Innovation.
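
A common way to turn multispectral imagery into a stress map is a vegetation index such as NDVI, computed per pixel from near-infrared (NIR) and red reflectance: NDVI = (NIR - Red) / (NIR + Red). Healthy vegetation reflects strongly in near-infrared, so low values flag plants worth inspecting. The sketch below computes NDVI and a spray mask; the 0.4 threshold and the reflectance values are illustrative assumptions, since usable thresholds depend on the crop, the sensor, and the growth stage.

```python
def ndvi(nir, red):
    """Per-pixel Normalized Difference Vegetation Index, in [-1, 1]."""
    return [
        [(n - r) / (n + r) if (n + r) > 0 else 0.0 for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir, red)
    ]


def spray_mask(ndvi_map, threshold):
    """Mark pixels whose NDVI falls below the threshold as candidates for treatment."""
    return [[value < threshold for value in row] for row in ndvi_map]


# Reflectance values (0-1) for a tiny 2x3 field patch from two spectral bands.
nir = [[0.60, 0.55, 0.20],
       [0.58, 0.25, 0.62]]
red = [[0.08, 0.10, 0.15],
       [0.07, 0.18, 0.09]]
mask = spray_mask(ndvi(nir, red), threshold=0.4)
treated = sum(flag for row in mask for flag in row)
print(f"treat {treated} of {len(mask) * len(mask[0])} pixels")
```

The mask, rather than the whole field, is what would drive a variable-rate sprayer, which is where the reduction in chemical usage comes from.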

Figure: Aerial view of a farm with a digital overlay highlighting areas of high and low crop stress detected by a precision agriculture drone using Computer Vision.


5. The Hardware Revolution: NPUs and Vision at the Edge

In 2026, much visual data is no longer sent to the cloud for processing; it is processed “At the Edge,” on the device that captured it.

The Power of the NPU

Modern smartphones and industrial cameras are equipped with Neural Processing Units (NPUs). These chips are optimized for the massively parallel matrix arithmetic that Computer Vision models require, which enables Real-time Data Processing for features like Face ID or gesture control without draining the device’s battery.
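
A quick back-of-the-envelope calculation shows why dedicated hardware helps. A standard convolution layer performs H_out × W_out × C_out × C_in × k × k multiply-accumulate operations (MACs), and almost all of them are independent of each other, so they can run in parallel. The layer dimensions below are hypothetical and chosen only to illustrate the scale.

```python
def conv_macs(out_h, out_w, in_channels, out_channels, kernel_size):
    """Multiply-accumulate operations for one standard (dense, non-grouped) conv layer."""
    return out_h * out_w * out_channels * in_channels * kernel_size * kernel_size


# Hypothetical layer: 3x3 conv, 64 -> 64 channels, producing a 112x112 feature map.
macs_per_frame = conv_macs(112, 112, 64, 64, 3)
fps = 30
print(f"{macs_per_frame:,} MACs per frame")
print(f"{macs_per_frame * fps / 1e9:.1f} billion MACs per second at {fps} fps, for one layer")
```

A full network stacks dozens of such layers, which is why a chip built around wide arrays of multiply-accumulate units can run these workloads far more efficiently than a general-purpose CPU core.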

Event-Based Cameras (Neuromorphic Vision)

Inspired by the retina, these cameras record only changes in brightness, with each pixel reporting independently. Instead of capturing “frames” like a traditional camera, they produce a continuous stream of brightness-change events, allowing for ultra-low latency and very high dynamic range.
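
The event-generation principle can be sketched by comparing two intensity frames: a pixel emits an event when its log intensity changes by more than a contrast threshold, with polarity +1 for brighter and -1 for darker. Real sensors do this asynchronously in per-pixel circuitry with very fine timestamps; comparing whole frames, the +1 offset, and the 0.2 threshold are simplifying assumptions made here for illustration.

```python
import math


def events_between(prev_frame, next_frame, timestamp, threshold=0.2):
    """Emit (x, y, timestamp, polarity) for pixels whose log-intensity change exceeds threshold."""
    events = []
    for y, (prev_row, next_row) in enumerate(zip(prev_frame, next_frame)):
        for x, (before, after) in enumerate(zip(prev_row, next_row)):
            # Log intensity mimics the sensor's response to *relative* brightness change;
            # adding 1 avoids log(0) for black pixels.
            change = math.log(after + 1) - math.log(before + 1)
            if change > threshold:
                events.append((x, y, timestamp, +1))
            elif change < -threshold:
                events.append((x, y, timestamp, -1))
    return events


# A bright dot moves one pixel to the right; the static background produces no events.
frame_a = [[10, 200, 10, 10]]
frame_b = [[10, 10, 200, 10]]
print(events_between(frame_a, frame_b, timestamp=0.001))
```

Only the two pixels where something changed produce output, which is why event cameras generate so little data for mostly static scenes and can react so quickly to motion.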

6. Challenges: Data Privacy and Algorithmic Bias

As Computer Vision becomes ubiquitous, the ethical stakes have never been higher.

- Facial Recognition Ethics: The use of vision systems for surveillance remains a highly debated topic. Many regions are adopting strict Data Governance Frameworks to ensure that facial data is used only with explicit consent.

- Dataset Bias: If a Computer Vision model is trained primarily on data from one demographic, its accuracy can drop significantly when applied to others. In 2026, “Fairness Audits” are a standard part of Step-by-Step AI Implementation.
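
At its simplest, a fairness audit compares a model’s accuracy across demographic groups on a labeled evaluation set. The sketch below computes per-group accuracy and the largest gap between groups; the group names, records, and 5-percentage-point tolerance are hypothetical, and real audits typically look at several metrics (such as false positive and false negative rates), not accuracy alone.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, predicted_label, true_label) -> {group: accuracy}."""
    correct, total = defaultdict(int), defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def audit(records, max_gap):
    """Return per-group accuracy, the largest accuracy gap, and whether it is within tolerance."""
    scores = accuracy_by_group(records)
    gap = max(scores.values()) - min(scores.values())
    return scores, gap, gap <= max_gap


# Hypothetical evaluation results for a face-matching model: (group, predicted, actual).
records = (
    [("group_a", "match", "match")] * 95 + [("group_a", "no_match", "match")] * 5
    + [("group_b", "match", "match")] * 80 + [("group_b", "no_match", "match")] * 20
)
scores, gap, passed = audit(records, max_gap=0.05)
print(scores, round(gap, 2), "PASS" if passed else "FAIL")
```

An overall accuracy of 87.5% would hide the problem here; only the per-group breakdown reveals that one group is served noticeably worse.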

7. Generative Vision: Creating from Sight

The intersection of Computer Vision and Generative AI has led to “Multimodal Models.”

Visual Question Answering (VQA)

Modern AI can “see” a photo and answer complex questions about it, such as “Does this bridge look structurally sound?” or “How many calories are in this meal?” These systems use a “Vision Encoder” to turn images into numerical vectors (embeddings) that a Large Language Model can process alongside text.
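
The hand-off from vision encoder to language model can be sketched as a projection step: image features are mapped by a learned matrix into the same embedding space as the LLM’s text tokens and placed in front of the question’s tokens, so the LLM processes both as one sequence. This mirrors the general pattern used by several open multimodal models (LLaVA, for example, uses a learned projection of this kind), but the dimensions and weights below are tiny hypothetical stand-ins; real encoders and projections are learned and far larger.

```python
def project(features, weight):
    """Linear projection: map each d_vision feature vector to d_text dimensions."""
    return [
        [sum(f * w for f, w in zip(feature, column)) for column in zip(*weight)]
        for feature in features
    ]


# Hypothetical: the vision encoder produced 3 patch features of size 2 ...
image_features = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
# ... and the projection maps size 2 -> size 4, the (toy) LLM embedding size.
projection = [[0.1, 0.2, 0.3, 0.4],
              [0.4, 0.3, 0.2, 0.1]]
# Stand-in embeddings for the question tokens ("how", "many", "calories").
question_embeddings = [[0.0, 0.1, 0.0, 0.1], [0.2, 0.0, 0.2, 0.0], [0.1, 0.1, 0.1, 0.1]]

image_tokens = project(image_features, projection)
sequence = image_tokens + question_embeddings   # the LLM attends over both together
print(len(sequence), "tokens of size", len(sequence[0]))
```

Once image and text live in the same vector space, answering a question about a photo uses the same attention machinery the LLM already applies to text.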

Synthetic Data Generation

To train vision models for rare events (like a car crash or a rare medical condition), developers use Generative AI to create hyper-realistic “Synthetic” training data. This helps address the “Data Scarcity” problem and speeds up Automated ML (AutoML) workflows.
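
A core idea in many synthetic-data pipelines is domain randomization: scene parameters such as weather, time of day, and camera pose are sampled at random for each rendered image, so the model sees far more variety than a real dataset would contain, and rare events can be deliberately over-sampled. The sketch below only generates the scene specifications; the parameter names, ranges, and rare-event rate are hypothetical, and the actual rendering would be done by a game engine, simulator, or generative model.

```python
import random
from dataclasses import dataclass


@dataclass
class SceneSpec:
    weather: str
    sun_elevation_deg: float   # negative values mean the sun is below the horizon (night)
    fog_density: float
    camera_height_m: float
    rare_event: bool           # e.g. a collision, deliberately over-sampled


def sample_scenes(count, rare_event_rate, seed):
    """Draw randomized scene specifications; a fixed seed makes the dataset reproducible."""
    rng = random.Random(seed)
    scenes = []
    for _ in range(count):
        weather = rng.choice(["clear", "rain", "snow", "fog"])
        scenes.append(SceneSpec(
            weather=weather,
            sun_elevation_deg=rng.uniform(-10.0, 70.0),
            fog_density=rng.uniform(0.2, 0.8) if weather == "fog" else 0.0,
            camera_height_m=rng.uniform(1.2, 2.0),
            rare_event=rng.random() < rare_event_rate,
        ))
    return scenes


scenes = sample_scenes(count=1000, rare_event_rate=0.3, seed=7)
print(sum(s.rare_event for s in scenes), "of", len(scenes), "scenes contain the rare event")
```

Setting the rare-event rate far above its real-world frequency is the point: the model gets enough examples to learn from, while the randomized conditions discourage it from memorizing any single look.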

Figure: A grid of hyper-realistic, computer-generated street scenes used to train Computer Vision models for various weather and lighting conditions.

8. Implementing Computer Vision: A Roadmap for Businesses

For organizations looking to deploy Computer Vision in 2026, the strategy is clear:

- Define the Visual Objective: Is it for security, quality control, or customer experience?

- Optimize the Environment: Good lighting and well-chosen camera angles often matter as much as the model itself.

- Choose the Deployment Model: Use Low-Code AI Development platforms for rapid prototyping, but switch to custom-tuned models for high-performance needs.

- Monitor for Drift: Visual environments change (seasonal lighting, new uniforms). Continuous retraining is essential for maintaining accuracy.
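
Drift monitoring can start with something as simple as comparing the distribution of a basic image statistic, such as mean frame brightness, between a reference period and recent footage. The sketch below uses the Population Stability Index (PSI) over fixed brightness bins; the bin edges, the sample values, and the 0.2 alert threshold (a commonly cited rule of thumb) are assumptions to tune for each deployment.

```python
import math


def histogram(values, edges):
    """Fraction of values in each [edges[i], edges[i+1]) bin; the last bin includes its top edge."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            if edges[i] <= v < edges[i + 1] or (i == len(counts) - 1 and v == edges[-1]):
                counts[i] += 1
                break
    return [c / len(values) for c in counts]


def psi(reference, current, edges, eps=1e-4):
    """Population Stability Index between two samples; eps avoids log(0) for empty bins."""
    ref = [max(p, eps) for p in histogram(reference, edges)]
    cur = [max(p, eps) for p in histogram(current, edges)]
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


edges = [0, 64, 128, 192, 256]                          # mean-brightness bins (0-255 scale)
summer = [150, 160, 170, 140, 155, 165, 180, 145, 158, 162]
winter = [70, 90, 100, 85, 120, 95, 130, 110, 80, 105]  # darker afternoons
score = psi(summer, winter, edges)
print(f"PSI = {score:.2f}", "-> schedule retraining" if score > 0.2 else "-> stable")
```

A cheap statistic like this will not tell you *what* changed, but it can trigger a closer look (and a retraining run) before accuracy visibly degrades.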

9. The Future: From Sight to Understanding

As we look toward 2027, the trend is “Scene Understanding.” Future Computer Vision systems won’t just say “There is a man with a hammer.” They will understand the intent: “A man is about to begin a construction task.” This leap from recognition to reasoning will define the next generation of robotics.

10. Conclusion: Seeing is Believing

Computer Vision has transformed from a science fiction dream into a foundational pillar of the global economy. It is the bridge between the physical and digital worlds, allowing machines to navigate our reality with a level of awareness that was previously unimaginable.

Whether you are an engineer building the next autonomous drone or a business owner looking to optimize your warehouse, understanding the power of Computer Vision is no longer optional. The machines are watching—and for the first time, they truly understand what they see.